The Firebomber

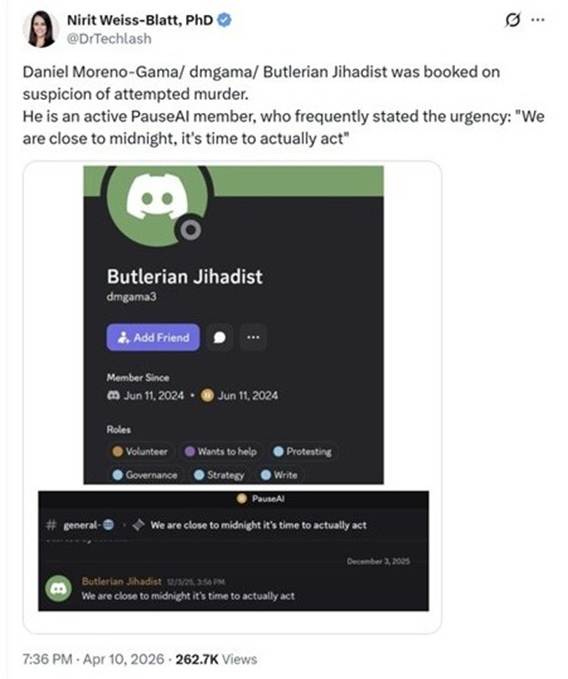

On December 3, 2025, Daniel Alejandro Moreno-Gama wrote in PauseAI’s Discord: “We are close to midnight, it’s time to actually act.” On April 10, 2026, he acted.

This is a developing story and will be updated. This piece has three parts: (1) The attack and the charges, (2) Social posts about AI doom, and (3) The reckoning. Each section will be updated with new information and analysis.

The Attack and the Charges

A 20-year-old suspect allegedly threw a firebomb at Sam Altman’s house on Friday night. The device was a Molotov cocktail (a bottle containing a flammable liquid and a burning rag). He then went to OpenAI’s headquarters and allegedly threatened to burn it down. He was taken into custody by San Francisco police outside of OpenAI’s offices. According to a criminal complaint filed Monday by the FBI, Daniel Moreno-Gama said he wanted to “kill anyone inside.”

At a Monday news conference, the FBI’s acting special agent in charge in San Francisco described the attack as “planned, targeted, and extremely serious.” Moreno-Gama arrived in SF with a gun, a manifesto, and a hit list. The Department of Justice said Moreno-Gama possessed a three-part document he had written against AI. The first part, titled “Your Last Warning,” allegedly advocated killing CEOs of AI companies and their investors, and contained their addresses.

Moreno-Gama faces both state and federal charges. At the state level, these include attempted murder (of both Altman and the security guard who was at his house the night of the attack) and attempted arson. At the federal level, he faces charges related to an unregistered firearm and attempted damage and destruction of property by means of explosives.

“If the evidence shows that Mr. Moreno-Gama executed these attacks to change public policy or to coerce government or other officials, we will treat this as an act of domestic terrorism, and together with our partners, prosecute him to the fullest extent of the law,” said Craig Missakian, US Attorney for the Northern District of California.

The San Francisco District Attorney’s Office said it would seek to have Moreno-Gama held in custody without bail because of the “public safety risk he poses.”

As reported by The San Francisco Standard, “In a note to employees Friday, OpenAI said there was no immediate threat to staff or its offices but advised that there would be increased police and security presence at its Mission Bay campus.”

Social Posts About AI Doom

In a blog post reacting to the attack, Sam Altman wrote, “Words have power.”

Yes, they do. And as the evidence from Moreno-Gama’s posts suggests, this was a long radicalization process shaped by “AI existential risk” rhetoric.

For background, see this guide explaining the differences between StopAI, PauseAI, and ControlAI.

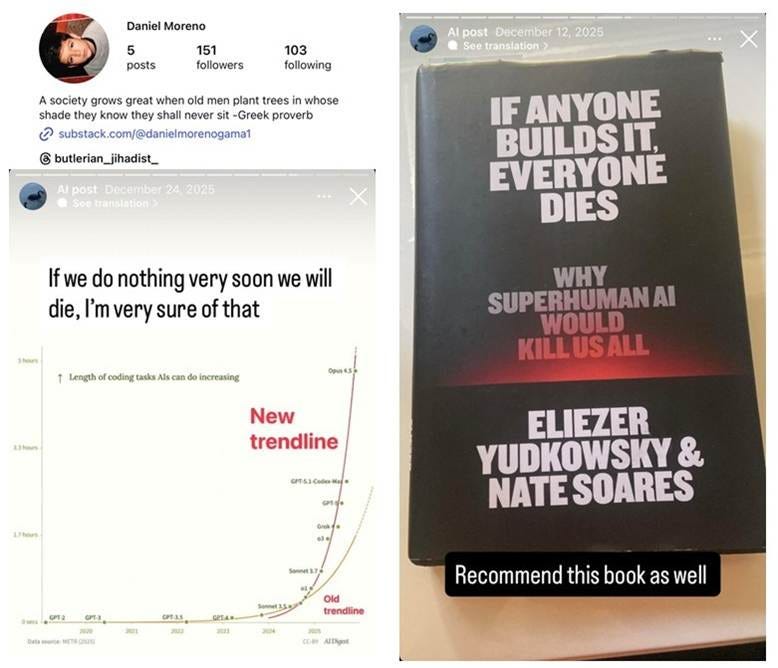

PauseAI Discord Server

On Friday night, after the suspect’s identity became public, I posted on Twitter that he had been an active member of PauseAI under the username dmgama/Butlerian Jihadist (both also used on his Instagram account). The name “Butlerian Jihadist” references the Butlerian Jihad of the Dune series, a violent crusade against “thinking machines.”

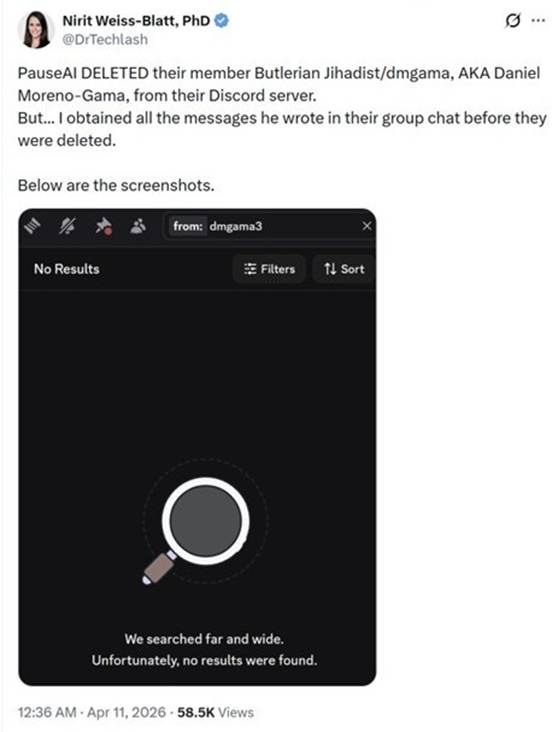

Shortly thereafter, PauseAI banned Moreno-Gama’s account and removed his message history from the Discord server. Since I had already obtained the messages he posted before they were deleted, I published them online.

Here are some highlights from those screenshots, in chronological order:

June 11, 2024

Introduction: “I am very passionate about this issue and am willing to learn and help whatever means necessary.” “Is there any sort of timeline for how long humanity has left?” Moreno-Gama scheduled a meeting to get onboarded to the group.

June 16, 2024

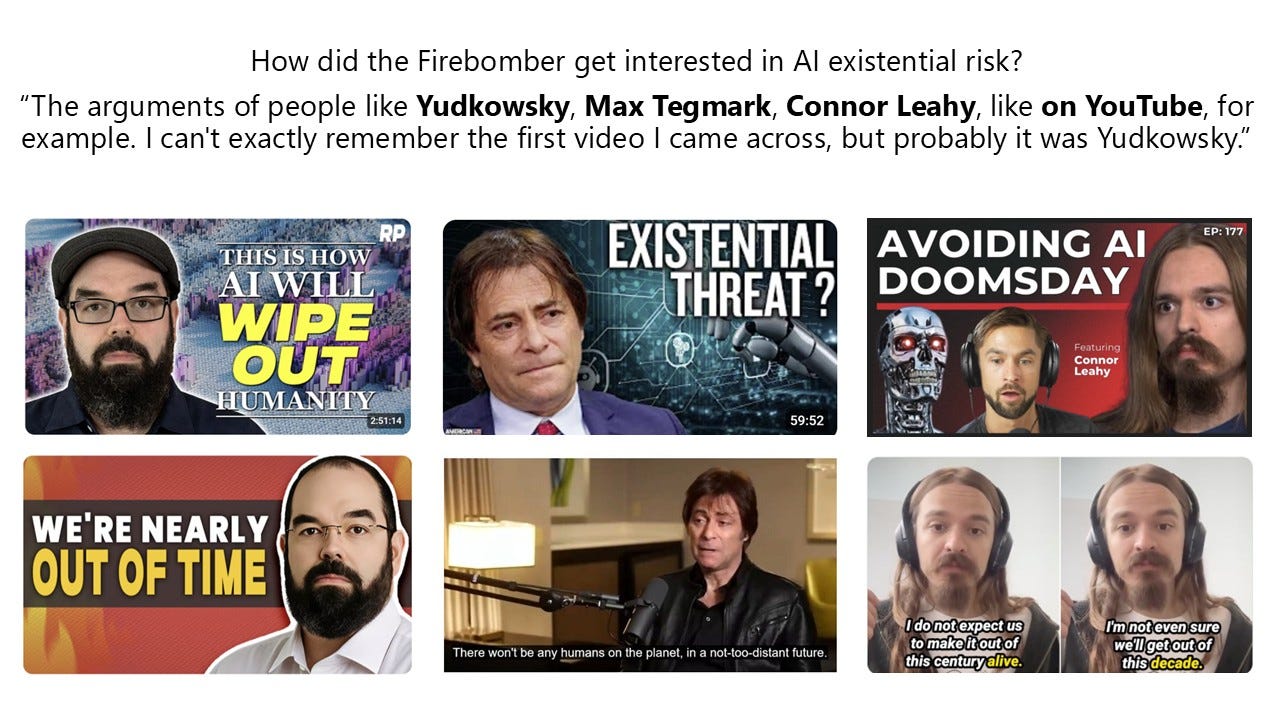

Moreno-Gama emailed Texas Congressman Dan Crenshaw, congratulating him on his 2023 interview with Eliezer Yudkowsky. He wrote that he was not concerned about job loss but rather about the “existential threat to our continued existence.”

November 5-6, 2025

“People will never feel as saliently about lesser AI risk as they will about a possible incoming extinction event (which we know is very likely, but they don’t believe it just yet). And we need that salience and passion for this movement to work.” “We owe it to everyone… to be stronger than that and at least die fighting.” “Time is against us, we need to start seeing results manifesting.”

December 3, 2025

“We are close to midnight, it’s time to actually act.” The moderator warned him: “Advocating violence in any form is grounds for a ban.” Daniel Moreno-Gama replied: “Do you really think we have any time left?”

January 8, 2026

Moreno-Gama got feedback from PauseAI members on his “AI Existential Risk” Substack post.

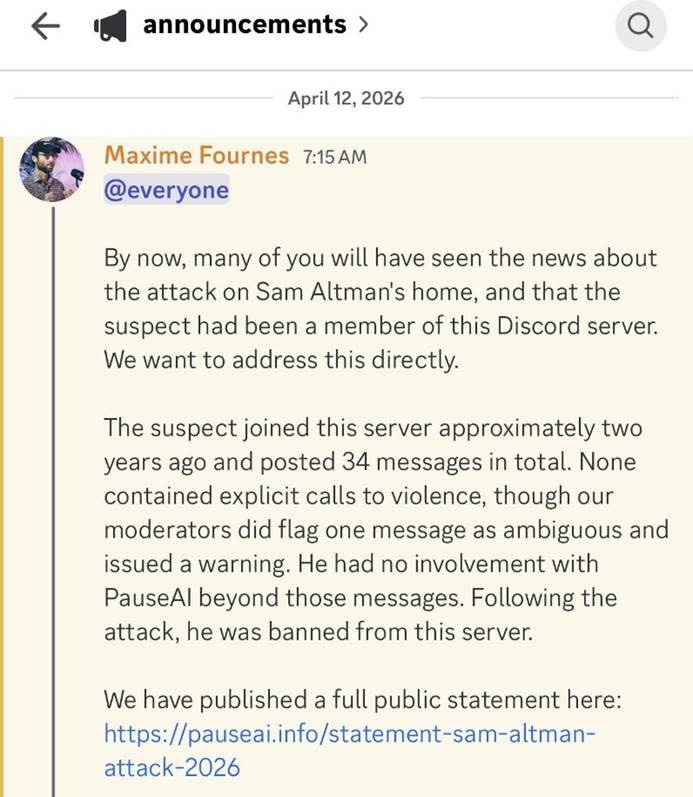

Two days after the attack, PauseAI CEO Maxime Fournes issued a statement to members of the group:

“The suspect joined this server approximately two years ago and posted 34 messages in total. None contained explicit calls to violence, though our moderators did flag one message as ambiguous and issued a warning. He had no involvement with PauseAI beyond those messages. Following the attack, he was banned from this server.”

StopAI

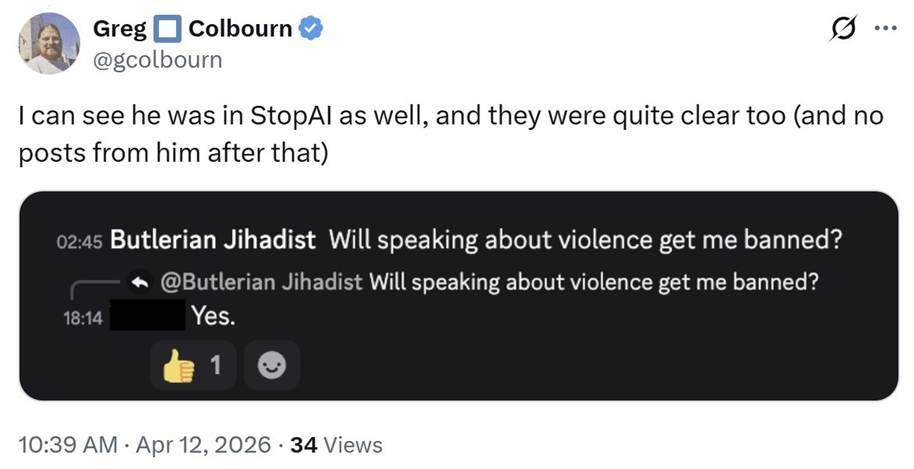

Moreno-Gama also appears to have been on the StopAI Discord server, where he displayed a troubling mindset.

StopAI’s recent statement adds:

“He joined a Stop AI public online forum, introduced himself, then asked, ‘Will speaking about violence get me banned?’ After he was given a firm ‘Yes,’ he ceased all activities on our forum. This was several months before his alleged criminal activities.”

One of the most common chants at StopAI’s protests outside OpenAI’s offices was: “Close OpenAI or We’re All Gonna Die!”

LessWrong

On LessWrong, the forum founded by Eliezer Yudkowsky, the moderator Jim Babcock (username jimrandomh) wrote that, “If Daniel Alejandro Moreno-Gama had a LessWrong account, then I, using my available tools as an admin and all publicly-reported usernames I’ve seen, cannot find it.” He emphasized that advocating violence “would also be grounds for a ban on LW.”

Oliver Habryka, who runs Lightcone Infrastructure and LessWrong, replied with a notable clarification: “Discussing or advocating violence is not banned.” He wrote:

“I think many people will encounter thoughts and ideas around whether violence is appropriate when they encounter the existential stakes of AI. Discussing whether those ideas are right or wrong is very much a thing I want LessWrong to be able to do. I think they are almost universally wrong, but people arriving at that conclusion will do so more likely with argument […] Discussing or advocating violence is not banned on LessWrong (though I would be surprised if it isn’t met with very consistent opposition in practically all cases).”

That position is striking, especially given LessWrong’s history of discussions about violence. In April 2025, I pointed out the following quotes:

“People like Eliezer [Yudkowsky] are annoyed when people suggest their rhetoric hints at violence against AI labs and researchers. But even if Eliezer & co don’t advocate violence, it does seem like violence is the logical conclusion of their worldview - so why is it a taboo?”

“If preventing the extinction of the human race doesn’t legitimize violence, what does?”

Moreno-Gama’s Steady Feed of Doomer Content

On the same Friday night, April 10, 2026, people began circulating screenshots of Moreno-Gama’s social media posts. As Alexander Campbell later summarized in “The ‘Rational’ Conclusion”:

“His Instagram was a feed of doomer content: capability curves captioned ‘if we do nothing very soon we will die,’ Venn diagrams placing us at the intersection of The Matrix, Terminator, and Idiocracy. Four months before the attack, he recommended Yudkowsky and Soares’ ‘If Anyone Builds It, Everyone Dies’ to his followers.”

Moreno-Gama also had a Substack, where he published six lengthy posts between January 6 and March 1, 2026. As Campbell summarizes:

“In January, he published ‘AI Existential Risk,’ estimating the probability of AI-caused extinction as ‘nearly certain.’ He called the technology ‘an active threat against anyone who is using it and especially towards the people building it.’ He concluded: ‘We must deal with the threat first and ask questions later.’ He wrote a poem imagining the children of AI developers dying, asking their parents why they did nothing. ‘May Hell be kind to such a vile creature,’ he wrote about the builders.”

The Reckoning

The attack should be a wake-up call about the effects of doomer rhetoric.

In the SF Chronicle article “Alleged Sam Altman Firebomber Wrote of Fears AI Would End Humanity,” I told the reporter:

“When people are in a state of anxiety, and depressed, worried, and all they hear from the echo chamber is doom, they become more vulnerable, and become more radicalized, even if places like PauseAI or StopAI are not advocating for violence.”

“AI Doomers Built a Radical Ideology. Now Their Followers Are Acting On It,” writes Jordan Schachtel on his Dossier Substack.

“They have told a generation of anxious, hyper online young people that the most powerful companies in human history are building a machine that could end civilization. And now, when their followers are beginning to act on their incendiary rhetoric, they expressed shock and horror when some of those people don’t stop at signing an open letter.”

“And these ideas will almost certainly continue to indoctrinate and radicalize like-minded individuals into criminal action,” Schachtel warns.

To explain how the AI doomers produced their first Molotov cocktail, Alexander Campbell identifies three moving parts:

Start with certainty, for example, “If Anyone Builds It, Everyone Dies.”

Add a purity spiral, aka escalation. Within this community, members compete to demonstrate commitment by raising the stakes. P(doom) numbers climb from 50% to 90% to 99.99999%.

Then, cheap talk gets tested. Once the stakes are framed as existential, nearly any form of extremism can be rationalized.

As Campbell put it: “It was only a matter of time before someone took the framework at face value. They should stop acting surprised when their own logic shows up at 3:45 AM with a bottle full of gasoline.”

A Fortune magazine article by Sharon Goldman, “Pause AI and Stop AI: Meet the anti-AI groups facing questions after the attack on Sam Altman,” features this observation (by yours truly):

“When prominent AI doomers like Eliezer Yudkowsky keep insisting that human extinction is imminent, it should not be surprising when someone is driven to extreme action. Young, anxious followers, looking for purpose, can be radicalized by apocalyptic AI rhetoric, even without explicit calls for violence.

The warning signs were there all along, including the November 2025 lockdown at OpenAI’s offices. The real question is how long the people fueling AI panic expect to avoid responsibility for where that radicalization leads, especially for the most vulnerable.”

Daniel Moreno-Gama, in an interview before he arrived in SF with a gun and a hit list:

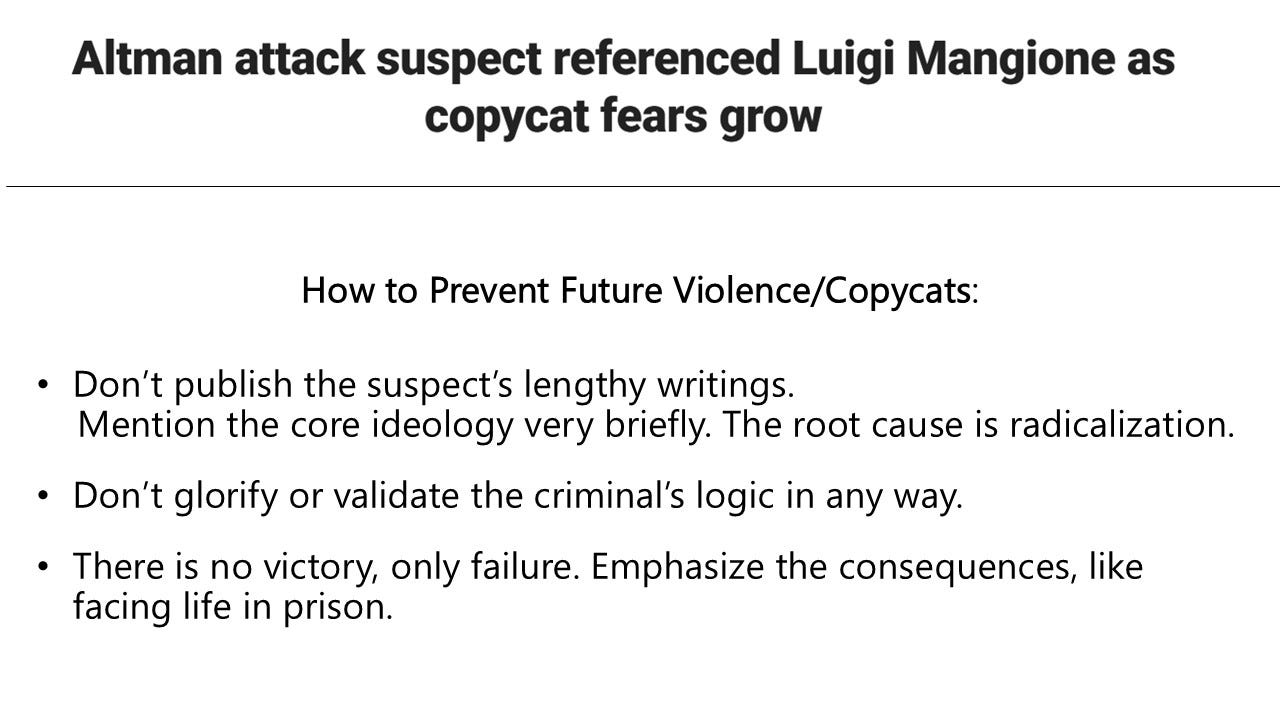

“The Last Invention” podcast episode with the Firebomber is irresponsible and dangerous, especially given the contagion effect.

Do not promote what the suspect has to say.

Criticize his radicalization, from watching doom videos on YouTube to making a Molotov cocktail.

The Warning Signs Were There All Along

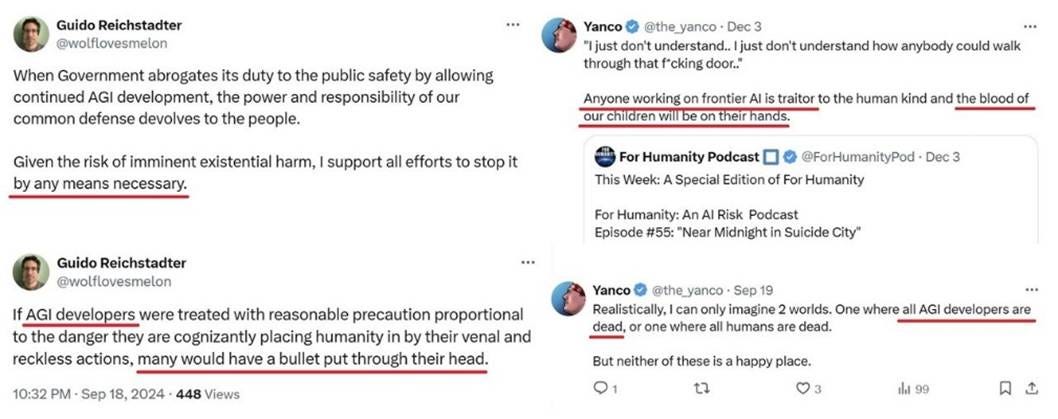

When Daniel Moreno-Gama joined StopAI and PauseAI, StopAI co-founder Guido Reichstadter (who later split from the group) and Yanco (who publicly helps with messaging) had already published incendiary rhetoric about AI developers, as the screenshots above show.

When I published those screenshots at the end of 2024, I added:

“Both PauseAI and StopAI stated that they are non-violent movements that do not permit ‘even joking about violence.’ That’s a necessary clarification for their various followers. There is, however, a need for stronger condemnation, and law enforcement should monitor this radicalization closely. The murder of the UHC CEO showed us that it only takes one brainwashed individual to cross the line.”

In April 2025, I described the radicalization process:

“A major problem with the AI doomers’ mindset of ‘the end justifies the means’ is that all possible radical ‘solutions’ are on the table. Why stop with pervasive surveillance of software and hardware if the government can also surveil all known AI researchers?

This is how it plays out: They start with weird ideas like ‘Patent trolling to save the world,’ ‘don’t build AI or advance computer semiconductors for the next 50 years,’ or that ‘using an open-source model’ should lead to ‘20 years in jail.’ But, then, they end up playing with dangerous ideas about ‘AGI developers’ having ‘a bullet put through their head.’ ‘Walk to the labs across the country and burn them down. Like, literally. That is the proper reaction.’

This radicalization process can only lead to bad outcomes.”

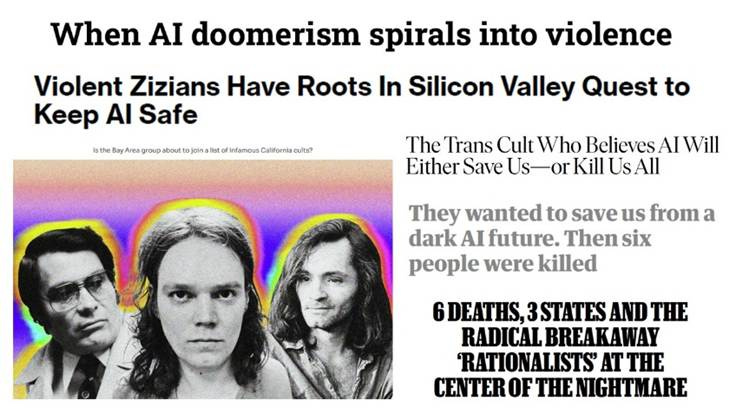

We also had the Zizians, a murder cult linked to six deaths across three states. I summarized that crazy story in “The Rationality Trap,” and it has been detailed in WIRED, New York magazine, and Rolling Stone. As a New York Times investigative reporter warned, “The story of the Zizians illustrates how concerns about AI safety, like any story involving apocalypse, can be used to make people do bad things.”

Then, in November 2025, StopAI co-founder Sam Kirchner abandoned his commitment to nonviolence, assaulted another member, and made statements that left the group worried he might obtain a weapon to use against AI researchers. Those threats prompted OpenAI to lock down its San Francisco offices.

In The Atlantic’s “The Strange Disappearance of an Anti-AI Activist,” we learned that Kirchner had said, “The nonviolence ship has sailed for me.” His whereabouts remain unknown.

In a “For Humanity” podcast episode, “Go to Jail to Stop AI” (#49, October 14, 2024), Sam Kirchner said:

“We don’t really care about our criminal records because if we’re going to be dead here pretty soon or if we hand over control, which will ensure our future extinction here in a few years, your criminal record doesn’t matter.”

After Kirchner’s disappearance, John Sherman, the podcast host and founder of “GuardRailNow” and the “AI Risk Network,” deleted this episode from Apple Podcasts, Spotify, and YouTube. Before it was removed, I downloaded the 1:14:14 video.

Sherman also produced an emotional documentary with StopAI and PauseAI titled “Near Midnight in Suicide City” (episode #55, December 5, 2024; see its trailer and promotion on the Effective Altruism Forum). It, too, was removed from podcast platforms and YouTube, but I preserved a copy in my archive. Before it was taken down, it had gathered 60k views.

As I wrote on Techdirt, “We need to confront the social dynamics that turn abstract fears of technology into real-world threats against the people building it.”

And as City Journal concluded in “A Cofounder’s Disappearance—and the Warning Signs of Radicalization”:

“We should stay alert to the warning signs of radicalization: a disaffected young person, consumed by abstract risks, convinced of his own righteousness, and embedded in a community that keeps ratcheting up the moral stakes.”

That warning, issued in November 2025, reads even more clearly in April 2026.
