What 10 Studies Reveal About AI Panic in the Media

This article has two parts: First, a literature review of 10 studies on AI media coverage. Second, a media-criticism discussion of what those studies miss: The organized creator/influencer ecosystem now distributing AI panic beyond traditional journalism.

The media studies fall into three clusters: 1. They document the post-ChatGPT escalation of risk framing. 2. They examine the myths and science fiction narratives that supply the emotional charge. 3. They show how political and national ideologies shape whether AI is presented as a promise or a threat.

Together, these studies illuminate how AI panic is produced and amplified, especially in Western media environments.

Executive Summary

Literature Review

The post-ChatGPT studies document a sharp escalation in risk framing: coverage has shifted toward danger, alarm, anthropomorphism, and sensational headlines, all helping fuel a moral panic.

These narratives draw on familiar cultural scripts from science fiction and mythology: Frankenstein, Pandora’s Box, and The Terminator.

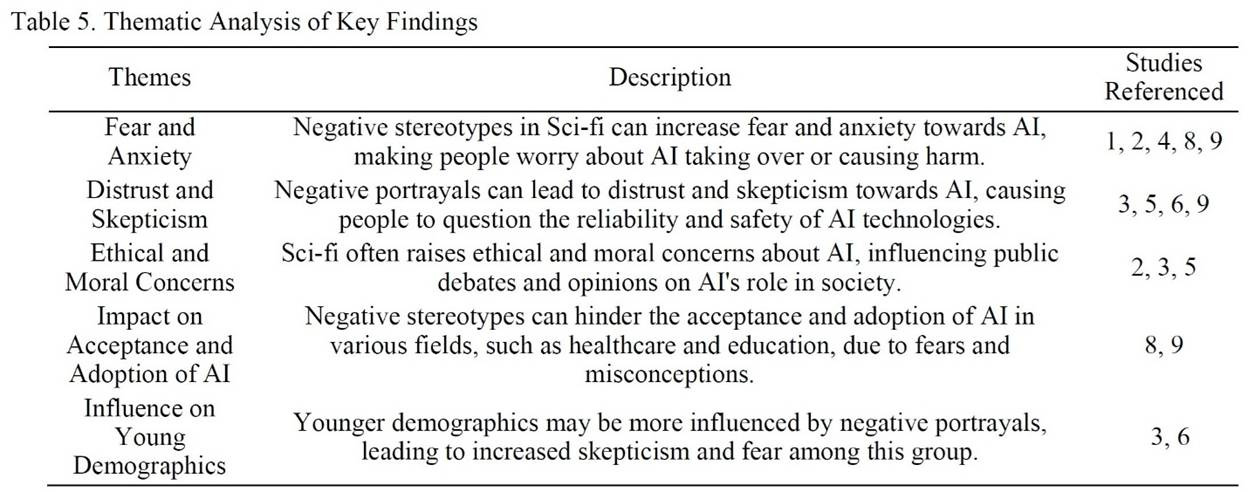

Disturbingly, younger audiences were found to be more strongly influenced by these negative portrayals, which heightened fear among this group.

AI phobia is particularly evident in Western coverage. By contrast, Chinese coverage portrays AI in overwhelmingly positive terms: as a source of economic power, national competitiveness, and patriotic pride.

These studies do not argue that AI poses no risks. They claim that media panic is a poor guide to understanding them. They recommend interrogating who benefits from fear-mongering narratives and focusing on concrete, measurable harms.

What the Literature Misses

In the media-criticism section, I extend the analysis beyond traditional media to the broader AI-panic ecosystem: social media creators, influencer campaigns, and the strategic pivot from extinction rhetoric to near-term concerns, like jobs, children, and data centers.

The Rationale Behind This Review

This review is based on two canonical communication theories: agenda-setting and framing. According to these theories, the media plays a vital role in constructing social and political realities, and issue-specific frames in coverage can lead to additional societal effects.[1] “Media discourse plays a crucial role in shaping the public’s attitudes and behaviors toward new technologies. Since individuals often rely on media to understand complex and unfamiliar technologies, news coverage significantly influences public perceptions of AI.”[2]

Technology journalists actively frame and mythologize AI, and those frames shape public opinion and the policy agenda. “Research has shown that media framing can strongly impact public attitudes and trust in AI. While positive portrayals can encourage AI acceptance and support, negative framing can fuel criticism and resistance.”[3]

Therefore, it is essential to examine how AI is framed in the media.

Since the release of ChatGPT in November 2022, the “existential risk” discourse has exploded. In my 2023 essay “What’s Wrong with AI Media Coverage & How to Fix it,” I argued that “The media thrives on fear-based content. It plays a crucial role in the self-reinforcing cycle of AI doomerism.”

Now, we have a growing body of research that helps document this phenomenon.

Out of this expanding corpus, this review highlights 10 studies: 1 from 2023, 2 from 2024, 5 from 2025, and 2 from 2026.

What the Academic Literature Shows

Post-ChatGPT Risk Amplification

1. ‘What They’re not Telling You About ChatGPT’: Exploring the Discourse of AI in UK News Media Headlines

UK headlines about ChatGPT were often sensationalized, with “Impending Danger” as the most common frame.

The researchers[4] analyzed 671 UK news headlines about AI and ChatGPT published between January and May 2023. Using inductive thematic analysis, the study finds that media representations are frequently sensationalized and oriented toward warning readers about danger.

The dominant frame is “Impending Danger,” which accounts for 37% of the headlines. The second major category is “Explanation/Informative.” By contrast, “Only a minority of headlines were related to helpful, useful, or otherwise positive applications of AI, ChatGPT, and other Large Language Models (LLMs).”

The authors criticize this imbalance:

“The ‘Impending Danger’ theme leans in some cases toward sensationalism. This is particularly clear when complete destruction of society or ‘annihilation’ is presented as ‘just around the corner’. Although there is ample evidence that AI presents risks, spontaneous, imminent destruction of the world and/or society does not seem to be an accurate assessment of these risks at the present time.”

The paper’s broader warning is that this “demonstrated sensationalism” can foster “unnecessary anxiety and fear among the public.” The authors call for more “balanced, informed, and nuanced discussions on AI and its potential repercussions.”

2. How ChatGPT Changed the Media’s Narratives on AI: A Semi-Automated Narrative Analysis Through Frame Semantics

After ChatGPT’s launch, AI became more closely associated with danger, risk, and alarmist narratives.

The study compares coverage in the six months before ChatGPT’s launch with coverage in the six months after it. The authors[5] find that sentences framing AI as a source of danger approximately doubled. “The overall narrative has shifted from seemingly balanced to much more alarmist.” There was “a qualitative shift in the types of threat AI is thought to represent, as well as the anthropomorphic qualities ascribed to it.”

“Our analysis suggests that if the dangers and risks of AI were a prevalent theme before ChatGPT, the launch pushed it into an even more central focus,” the authors explain. “Both in terms of the number of articles mentioning it and the relative frequency with which it appears within these articles.”

The researchers conclude:

“Our study contributes to this debate by showing that the post-ChatGPT coverage shifted toward a more cautious and even alarmist stance. The relative proportion of the relevant frames grew significantly, suggesting dangers and risks taking a larger topic in the coverage.”

Overall, the study demonstrates “how media attention shifted toward risks associated with AI, as opposed to AI being a solution for other problems or playing another positive role.”

3. The Dystopian Imaginaries of ChatGPT: A Designed Cycle of Fear

ChatGPT coverage followed familiar “fear cycles,” turning the technology into a site of moral panic.

This article examines the fear-heavy media response to ChatGPT after its release. It argues that journalistic discourse quickly organized itself around recurring dystopian themes.

The study uses the concept of “moral panic.” “Fear is not simply a reaction to an objective threat, but a socially constructed phenomenon developed by media and public discourse.” The authors[6] introduce the idea of “fear cycles” as “the recurring, fearful responses to emergent technologies,” which “help develop and maintain pessimistic visions of the future we view as dystopian imaginaries.”

This study places the AI panic in a historical context. It shows that ChatGPT coverage followed a familiar pattern:

“Analyzing current dystopian imaginaries around ChatGPT highlights a deeper understanding of what we term ‘fear cycles’ – recurring responses to emergent technologies characterized by negative predictions, emotions, and narratives. Such ‘fear cycles’ are not unique to AI but are part of a broader pattern in the discursive reaction to new technologies.”

“Journalists largely rely on common fears to amplify a dystopian narrative while at times developing unique anxieties attributed to artificial intelligence,” the authors note. “This understanding further raises awareness of how the public might be predisposed to certain dystopian tropes that consistently circulate within the media.”

4. The Rise of Artificial Intelligence Phobia! Unveiling News-Driven Spread of AI Fear Sentiment Using ML, NLP, and LLMs

Large-scale headline analysis found persistent fear-laden language that portrays AI as an existential threat.

The study analyzed nearly 70,000 AI-related news headlines using NLP, ML, and LLM-based classification. The researchers[7] ask how often AI is “positioned as a fear target.” Their findings point to the persistent use of fear-laden language that portrays AI as dangerous, unreliable, or an existential threat.

The broader implication is that such alarmist coverage can distort public understanding, discourage engagement with AI, and shape policy in overly restrictive ways:

“The findings show a persistent presence of emotionally negative and fear-laden language in AI news coverage. This portrayal of AI as dangerous to humans or as an existential threat profoundly shapes public perception, fueling AI phobia that leads to behavioral resistance toward AI, which is ultimately detrimental to the science of AI.”

5. Artificial Intelligence in the Public Debate: Risk Amplifiers and Mitigators in Media Discourse

Spanish media coverage centered heavily on “risks to civilization and humanity.”

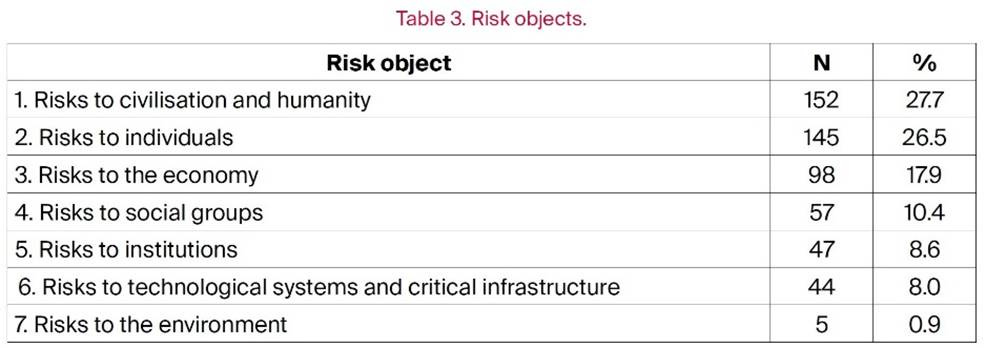

This article analyzed 2,705 journalistic texts from six Spanish newspapers during the first year after ChatGPT’s launch. The authors[8] developed a taxonomy of seven risk categories. The study finds that the most common risks in the coverage were “risks to civilization and humanity” and “risks to individuals.”

The most prevalent discourse (27.7%) concerns risks to civilization and humanity. These risks have the potential to cause severe damage or even threaten the continuity of human civilization. The authors argue that “The media’s focus on existential risks” may “contribute to disproportionately negative perceptions.”

The second-largest category is risks to individuals, at 26.5%. These risks directly affect people, whether personally, psychologically, or in terms of their fundamental rights. They include, in particular, loss of privacy and behavioral manipulation through algorithmic systems.

Economic risks are the third-most significant risk group, at 17.9%. These include fears about job automation, rising inequality, and potential financial bubbles.

The study also examines who gets to speak in the debate. It finds that the dominant voices were media organizations, regulators, and companies, while civil society was underrepresented.

6. From Moral Panic to Pragmatic Governance: Reframing AI’s Societal Impacts in Employment, Education, and Ethics

The AI debate is trapped between alarmist moral panic and complacent reassurance.

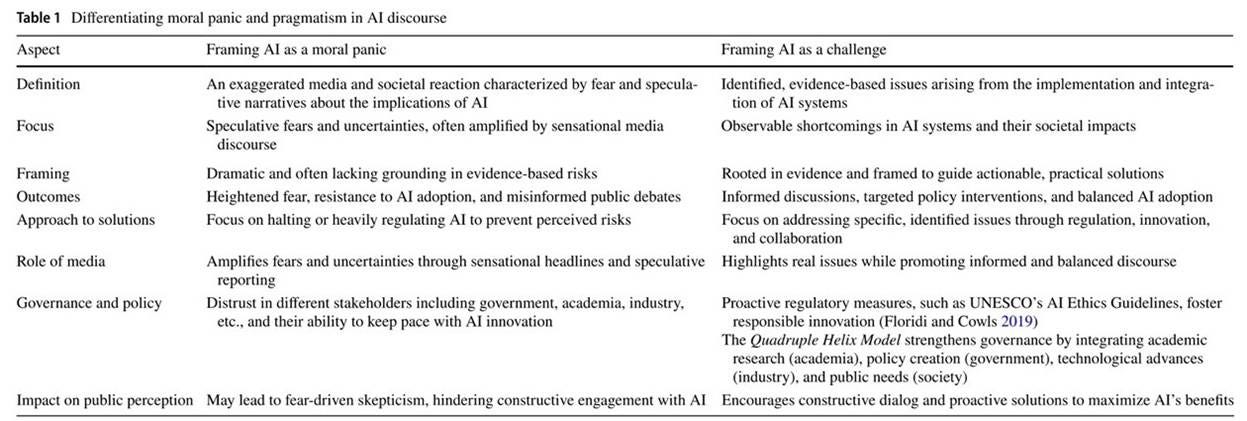

This study argues that public debates about AI “oscillate between alarm and reassurance,” and that “this pattern aligns with classic analyses of moral panic”: “episodes of amplified public anxiety in which new technologies are cast as threats to social order through cycles of claims-making, media escalation, and calls for control.”

The authors[9] distinguish between moral panic and pragmatism. “Moral panic refers to a discourse pattern characterized by disproportionate threat construction and volatile amplification, whereas pragmatism denotes a program for measurable risk remediation.”

Their core argument is that framing AI as a civilization-ending threat may capture attention, but it often pulls the discussion toward symbolic reactions instead of practical governance. Thus, the authors argue, the AI debate should move from panic headlines to a pragmatic risk-governance approach: less speculative doom, more measurable failures, and more cross-sector coordination that can steer AI toward public benefit.

Mythological Frameworks and Science Fiction

7. Myth and Symbols in the Artificial Intelligence (AI) Media Discourse. The New Myths of Modernity: AI – An Interpretive Study

AI media discourse relies heavily on mythological and archetypal frames.

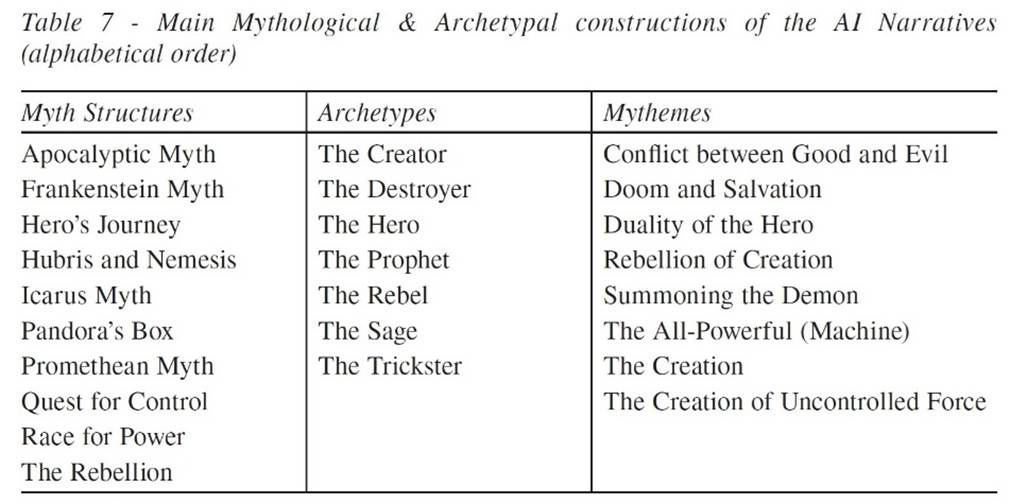

The study examines how “media discourse about AI is prone to reveal archetypal, mythological structures,” and how the concept of AI in media discourse “has the characteristic features of a modern myth.”

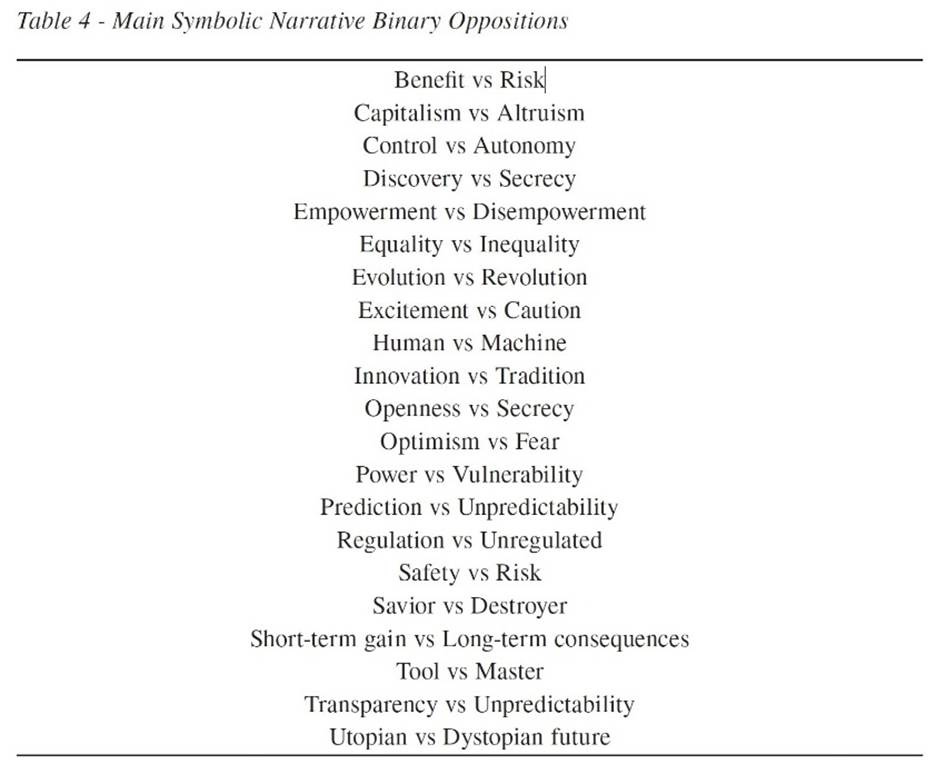

The author[10] observes that “Almost without exception, studies that examine artificial intelligence from various perspectives note the controversial nature associated with this subject.” In particular, the discourse “oscillates between optimism regarding AI as a solution to various deficiencies and needs in society, and pessimism in which AI is seen as a threat to humanity in general.”

The study breaks down the AI narratives “into their constituent elements, identifying recurring patterns and symbolic relationships.” Among them are the binary oppositions of Utopian vs Dystopian future, Human vs Machine, Savior vs Destroyer, and Tool vs Master.

The author notes that although “the narratives tend to present both positive and negative attributes,” “the balance tends to often go in favor of the negatively.”

“Our analysis confirmed the deep mythological, archetypal substrate of the AI media narratives. Our findings showed that journalists, consciously and unconsciously, appeal to metaphor and mythological and archetypal allegories to explain the AI reality. The corpus revealed direct mentions of the ‘Promethean’ myth, the ‘Pandora’s Box’ myth, the ‘Apocalyptic’ myth, the ‘Icarus’ myth, the ‘Frankenstein’ myth in several areas, regardless of the characteristics of the media outlet that generated the narrative.”

“By means of such symbolic constructions, archetypes and myths, AI acquires meaning within the collective imaginary,” the author concludes, “which will directly influence its further social acceptance.”

8. The Influence of Negative Stereotypes in Science Fiction and Fantasy on Public Perceptions of Artificial Intelligence: A Systematic Review

Negative sci-fi and fantasy portrayals of AI increase fear, anxiety, distrust, and skepticism toward real-world AI technologies.

This systematic review examines how negative portrayals of AI in science fiction and fantasy shape public attitudes. Looking across a set of studies published between 2011 and 2023, the authors[11] conclude that (1) “Negative stereotypes in Sci-fi significantly contribute to increased fear and anxiety toward AI,” and (2) “Negative portrayals of AI also lead to increased distrust and skepticism toward AI technologies.”

The systematic review clarifies:

“Sci-fi has long been a breeding ground for exploring new ideas and concepts, with AI being a recurring theme in these genres. However, the portrayal of AI in popular media has often been marred by negative stereotypes that can influence public perceptions of this technology. One common stereotype in Sci-fi is the idea of AI as a malevolent force that seeks to destroy humanity. Films like ‘The Terminator’ and ‘The Matrix’ depict AI as a threat to civilization, instilling fear of a future where machines become self-aware and turn against their creators. These dystopian visions of AI have contributed to a general sense of unease and suspicion toward the technology.”

One of its notable claims is that these cultural images affect younger audiences especially strongly and reduce public willingness to adopt AI in real-world settings:

“The findings indicate that negative portrayals in these genres significantly increase fear and anxiety toward AI, leading to heightened skepticism and ethical concerns. Moreover, these negative stereotypes hinder the acceptance of AI in various fields, particularly affecting younger demographics more profoundly.”

“However, positive portrayals can mitigate these negative attitudes, enhancing familiarity and acceptance,” the authors add. “The complex relationship between media portrayals and attitudes toward AI underscores the need for balanced and accurate representations to foster a more informed and accepting public perspective on AI technologies.”

Political and National Ideology in AI Coverage

9. Does the Media’s Partisanship Influence News Coverage on Artificial Intelligence Issues?

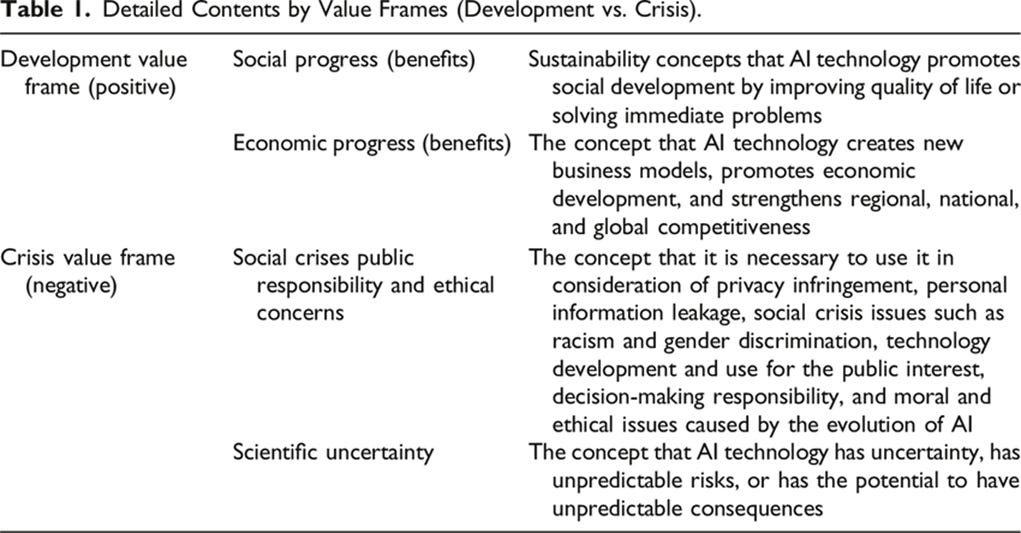

Conservative outlets tended to emphasize AI’s benefits and development value, while progressive outlets focused more on crisis frames, ethics, side effects, and regulation.

This study examines how political orientation shapes AI-related news in South Korea. The author[12] finds that conservative and progressive outlets frame AI differently:

“The analysis found that conservative media coverage predominantly focuses on positive aspects, emphasizing development value frames such as the benefits and societal progress brought by AI. In contrast, progressive media often highlight crisis value frames, focusing on issues like side effects, ethical concerns, and legislation surrounding AI.”

The broader takeaway is that AI coverage is not ideologically neutral. Political orientation affects whether the technology is presented mainly as a promise or as a risk.

10. Of Pride and Patriotism: The Representation of Artificial Intelligence in Chinese Official and Media Discourse

Chinese AI coverage is overwhelmingly positive, linking AI development to national strength, global competitiveness, and patriotic pride.

This study examines how AI is represented in Chinese official and media discourse. The author[13] notes that “Compared to the wealth of work on AI narratives in Western media, relatively few studies have analyzed how AI is represented in Chinese news.” The central finding is that “AI in China is predominantly framed in positive terms.”

“China’s achievements in the sector and AI’s fundamental role for the construction of a powerful country are widely highlighted, legitimizing the party-state’s approach and stimulating a strong sense of national pride and patriotism.”

“A generally positive portrayal of AI applications and impacts legitimizes the CPC’s push for its development in the country,” the author observes.

“Risks are somehow minimized by only mentioning them as something to be anticipated and dealt with. The CPC-state is portrayed as responsible and committed to seizing every opportunity to make China stronger, but also wise and competent about the risks to be addressed.”

“Since artificial intelligence is presented as vital to China’s international competitiveness, dropping out of the global AI race is simply not an option,” the author concludes. “Its desirability is instilled by narratives that link its development to the restoration of China’s greatness.”

The contrast here is revealing. While Western coverage amplifies AI panic, Chinese coverage asks how AI can strengthen the nation. It is a radically different AI story.

Discussion

The studies reviewed here focus mostly on traditional media headlines and journalistic frames. But the AI panic ecosystem no longer runs only through legacy media such as newspapers and magazines. It now travels through YouTube channels, influencer campaigns, podcasts, nonprofit-funded creator programs, and viral short-form content.

Much of this ecosystem is being optimized for attention and persuasion. That helps explain why some messaging strategies have moved from extinction fears to more emotionally immediate concerns.

Building on the literature review, this section examines that broader ecosystem.

Content Creators on YouTube

The AI panic has moved from media framing to organized content distribution.

Recently, the Washington Post reported that AI-doom organizations are paying and promoting content creators to push “AI existential risk” messages to the public through “sponsoring social media posts and partnering with influencers.”

Influencers with tens of millions of subscribers are being recruited to preach doom. “A single paid YouTube collaboration last year scored 1.6 million views, and ControlAI also sponsored an episode of Green’s popular SciShow that netted 1.8 million views.”

“Most AI experts in academia and industry say there’s no scientific support for claims of imminent danger to the entire species, arguing that the doomsday forecasts overestimate existing technology and under-appreciate the complexity of the real world,” writes Nitasha Tiku of the Washington Post. “But AI safety groups moving to sign up content creators believe the blistering pace of AI development should make the public more receptive to their predictions.”

As of May 1, 2026, the following AI doom YouTube channels had gathered a combined total of roughly 108 million views:

Species | Documenting AGI = 31,883,680 views.

It features videos like “It Begins: An AI Literally Attempted Murder To Avoid Shutdown,” which gathered 10M views and is overwhelmingly misleading.

Rational Animations = 30,582,611 views.

It features videos like “Everything might change forever this century (or we’ll go extinct),” which gathered 1.9M views.

AI In Context = 15,744,555 views.

It features videos like “We’re Not Ready for Superintelligence,” which gathered 10M views, or one promoting the book “If Anyone Builds It, Everyone Dies” (by Eliezer Yudkowsky and Nate Soares), which gathered 1.4M views.

The AI Risk Network = 10,515,099 views.

It has videos like “Is AI Actually Alive?”

Siliconversations = 9,576,119 views.

It features videos like “AI Chatbots Could Kill Us All.” This channel also produced a video for the Future of Life Institute’s report – “Scientists Graded AI Companies On Safety… It Went Badly.” The Future of Life Institute promoted this video on social media, and guess what? Siliconversations is funded by the Future of Life Institute.

DoomDebates = 4,533,732 views.

It features videos like “How It Ends: The Most Likely AI Doom Scenario.”

The Inside View = 4,035,664 views.

It features videos like “I Went On A Hunger Strike Outside Google To Stop The AI Race.”

Lethal Intelligence = 1,127,347 views.

It features videos like “The Ultimate Intro to Existential Risk from upcoming AI.”
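The combined figure can be checked against the per-channel counts listed above. A minimal Python sketch follows; the counts are copied from the list as given (the May 1, 2026 snapshot), and the variable names are illustrative only.

```python
# Per-channel view counts exactly as listed above (snapshot of May 1, 2026).
channel_views = {
    "Species | Documenting AGI": 31_883_680,
    "Rational Animations": 30_582_611,
    "AI In Context": 15_744_555,
    "The AI Risk Network": 10_515_099,
    "Siliconversations": 9_576_119,
    "DoomDebates": 4_533_732,
    "The Inside View": 4_035_664,
    "Lethal Intelligence": 1_127_347,
}

# Sum the counts and express the total in millions.
total_views = sum(channel_views.values())
print(f"Total: {total_views:,} views (~{total_views / 1e6:.0f} million)")

# Share of the total held by each channel, largest first.
for name, views in sorted(channel_views.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {views / total_views:.1%}")
```

The total comes to 107,998,807 views, which rounds to the 108 million cited. The two largest channels together account for more than half of it.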

New attempts to shape public opinion are appearing with growing frequency. “Last year, the Foresight Institute ran an existential meme competition, and January saw the launch of the Frame Fellowship, an eight-week AI safety creator program from Effective Altruism,” reported The San Francisco Standard in “Aella launches AI doom creator residency in Berkeley.” In April, Aella launched “Plz Don’t Kill Us” to create viral content about the existential threats posed by AI. Her new program “will offer residencies to up to 100 creators, who will be asked to post daily short-form content about ‘AI doom.’ Food and housing in Berkeley will be provided.” Aella told the reporter that “We have funding from the Survival & Flourishing Fund and the Machine Intelligence Research Institute.”

Notably, according to the Washington Post, the Future of Life Institute “has funded 30 projects to develop AI safety content since launching a digital media accelerator last year.” “The application form on the nonprofit’s website says it plans to spend $100,000 a month.”

The Doomers’ Pivot

A recent study finds that, despite years of effort in traditional and social media, the “human extinction” narrative has failed to resonate with the public. It has not mobilized the public as doomers had hoped: “Of all the AI harms/risks … people are least concerned about x-risk.” In addition, “existential/extinction risk was mentioned by very few people, and even when it was, several wrote of their skepticism of this risk being a real one.”

People are far more concerned about job loss, autonomous weapons, children and mental health, and environmental harms. That finding helps explain the messaging pivot now underway. Some AI policy advocates have moved from the “AI will kill us all” stance to “meet people where they are.” The goal is not to abandon the extinction frame, but to route it through concerns that feel more immediate and politically usable.

This trend is evident in a recent “For Humanity” podcast with Philip Trippenbach, strategy director at the Seismic Foundation, which is “raising the salience of AI risk through targeted, professional communications.”

In the episode, titled “How to Talk About AI Risk Without Scaring People Away,” Trippenbach and John Sherman discussed how to move beyond abstract existential risks and address “mundane” AI harms, such as impacts on jobs and children, to “meet people where they are.” From the episode description: “AI extinction searches are being eclipsed by AI jobs, AI and children, and AI suicide”; “Why ‘this isn’t fair’ may be a more powerful message than ‘we’re all going to die’”; “The case for creating friction across many AI harms as a path to slowing things down.”

Vitalik Buterin, co-founder of Ethereum, whose SHIB donation became central to the Future of Life Institute’s funding, said recently, “I’m actually pretty open-minded about the anti-data-center populism” as a way to “lengthen AGI timelines.”

I’ve addressed this mindset in “The Rationality Trap”: “The world-ending stakes accelerated the ‘ends-justify-the-means’ reasoning.”

Technological Determinism Versus Social Determinism

The studies reviewed here also reveal a deeper pattern: much of the AI coverage is technologically deterministic.

In the TECHLASH book, I discussed this framework:

“Under technological determinism, some argue that technology is deterministic: the state of technological advancement is the determining factor in society.

Others dispute that view, claiming the opposite [social determinism], that it is society that affects technology (social forces shape and design technology).

Under technological neutrality, some say technology is neutral, meaning its effects depend entirely on the social context.

Others defend the opposite: they view the effects of technology as being inevitable, regardless of the society in which it is used.”

The studies above point to technological determinism. AI is portrayed as an autonomous force driving society in negative directions. Less attention is paid to AI’s benefits and to the many social factors shaping it.

My own view is closer to social determinism, as argued in my recent “AI and Human Resilience” piece, or, more precisely, to mutual shaping: emerging technologies and social actions are usually mutually dependent. AI influences society, but societies also shape its development and adoption.

Final Remark

The through line across these studies is the realization that the fight over AI is a fight over narrative: Who gets to define what AI is and what it can do?

For too long, AI coverage has been trapped between binary narratives of utopia vs. dystopia. Here’s a recommendation: let’s listen to the people who reject the idea that those are the only two options and who offer a realistic middle case grounded in better evidence. Our AI discourse desperately needs it.

Endnotes

[1] “Social cognitive theory and cultivation theory [also] provide frameworks for understanding how repeated media exposure shapes audience perceptions. These theories suggest that consistent portrayals of AI as malevolent or dangerous in Sci-fi reinforce negative stereotypes, thereby influencing public attitudes.” (Bo, Ma’rof, and Zaremohzzabieh, 2025).

[2] Quoted from the paper “Perceptions and paradigms: An analysis of AI framing in trending social media news” by Ruolan Deng and Saifuddin Ahmed, 2025.

[3] Also quoted from Deng & Ahmed, 2025.

[6] Brent Lucia, Matthew Vetter, and Varshil Patel. 2025. Convergence: The International Journal of Research into New Media Technologies.

[7] Jim Samuel, Tanya Khanna, Julia Esguerra, Srinivasaraghavan Sundar, Alexander Pelaez, and Soumitra S. Bhuyan. 2025. IEEE Access.

[8] Berta García-Orosa, Cristian Augusto González-Arias, Tania Forja-Pena, and Beatriz Gutiérrez-Caneda. 2026. Studies on the Journalistic Message.

[10] Lonel Barbalau. 2025. Chapter #1 in the book: Artificial Intelligence and Human Perception: Media Discourse and Public Opinion, edited by Emma Lupano and Paolo Orrù.